If you’ve ever used Google Docs to think through an idea, a calculator to solve a problem, or ChatGPT to help you shape feedback, you already know something important:

You weren’t outsourcing your thinking.

You were thinking with a tool.

That idea sits at the heart of a body of research on the extended mind: the view that our thinking does not stop at the skull but stretches out into the tools we use. A recent paper by José Hernández-Orallo (2025) applies this idea to artificial intelligence and asks a bold question: what happens to learning and assessment when AI becomes part of how we think?

This question matters deeply for teachers, because it challenges how we design lessons, how we set assignments and how we decide what “counts” as learning in the AI age.

First, a quick refresher: What is SAMR?

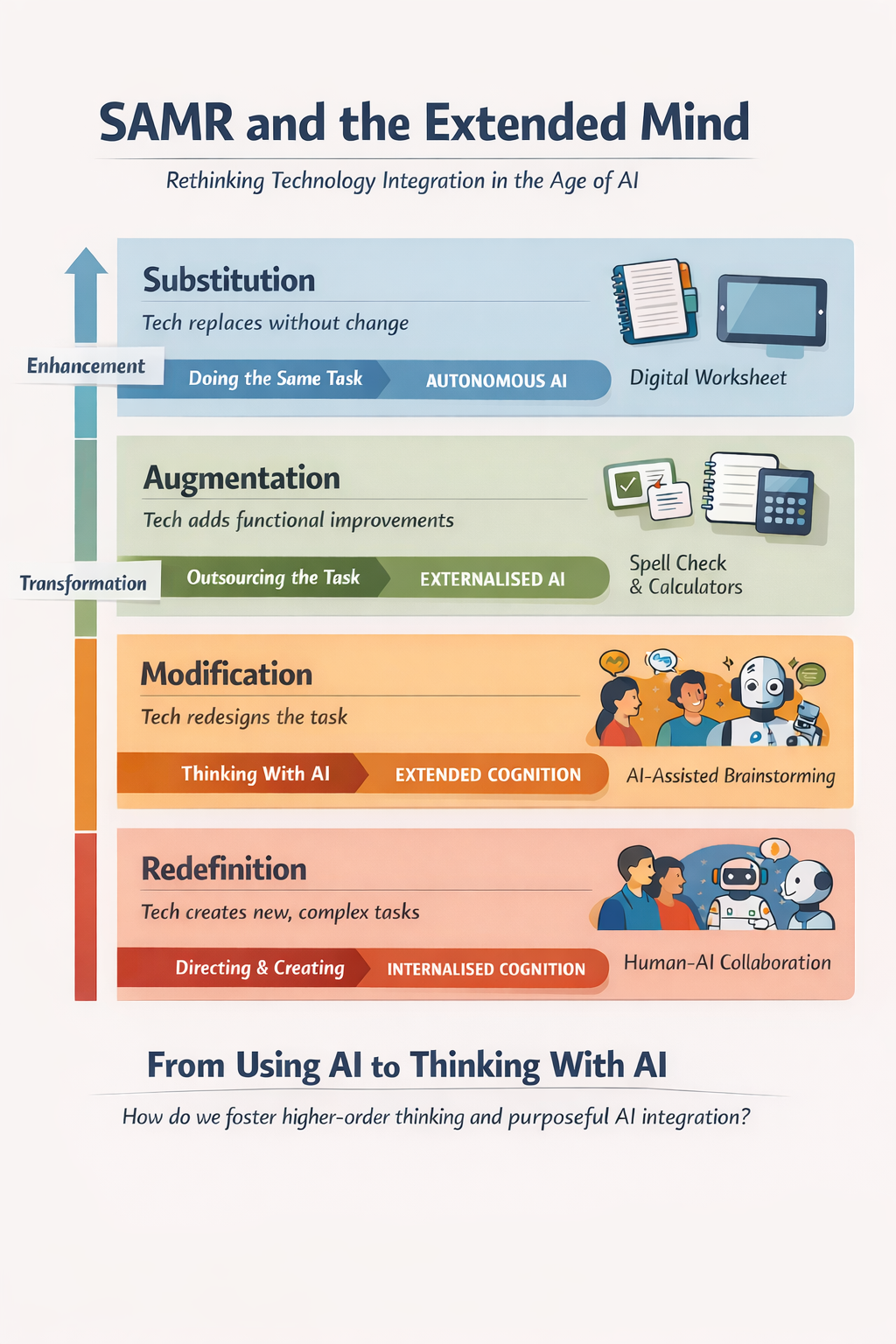

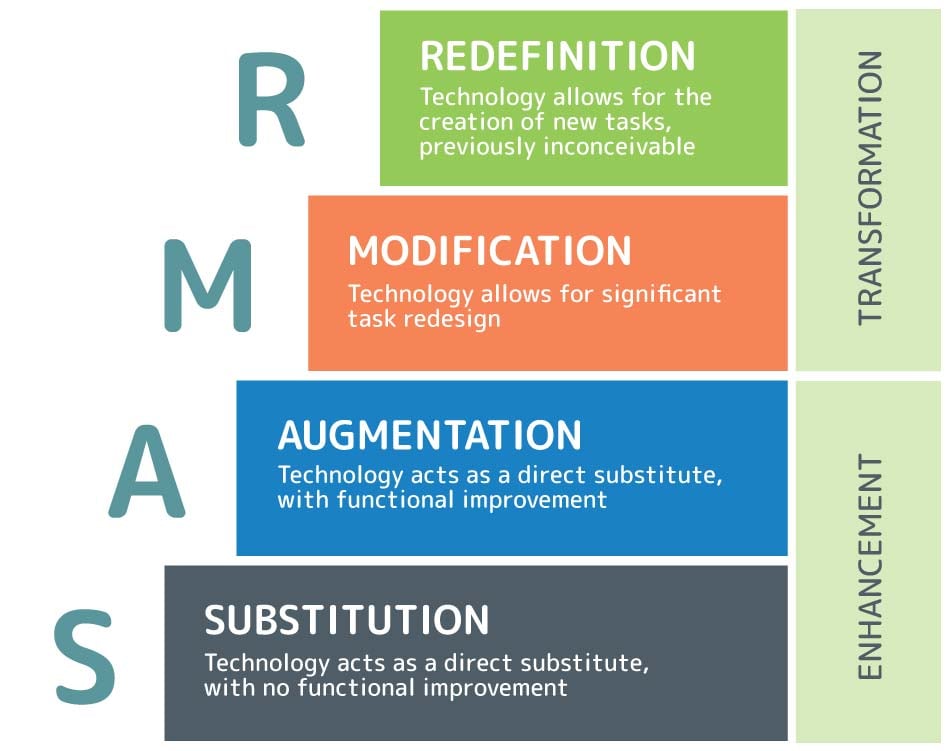

Many educators will already know the SAMR model, but if you don’t, it’s a simple way of describing how technology is used in learning, across four levels: Substitution, Augmentation, Modification and Redefinition.

At the bottom of the model, Substitution means technology replaces something without really changing the task, for example, typing an essay instead of handwriting it. Augmentation adds a bit of functional improvement, such as spell-check or grammar suggestions.

At the top of the model, things become more interesting. Modification means the task itself is redesigned, perhaps through collaborative editing or multimedia. Redefinition means technology enables entirely new kinds of learning that were not possible before (such as global collaboration, interactive simulations, or AI-supported design work).

SAMR has helped teachers for years to move away from simply digitising old tasks and toward more creative, transformative uses of technology.

But AI changes the game.

Why SAMR looks different in the age of AI

Hernández-Orallo makes a striking observation: most of what AI does in education doesn’t sit neatly at the bottom of SAMR. It tends to operate at the top, in modification and redefinition, because it changes how tasks are carried out in fundamental ways.

When a student uses AI to brainstorm, refine, compare, rewrite, question and critique ideas, the task is no longer just “writing an essay”. It becomes something closer to design, curation, sense-making and judgement. The thinking shifts from producing text to shaping, steering and evaluating ideas.

This is why AI feels so disruptive to traditional assessment. It doesn’t just make old tasks faster. It quietly redefines what the task is.

From “using AI” to “thinking with AI”

This is where the idea of the extended mind becomes so helpful.

The paper describes different ways people and AI can interact. Sometimes AI works on its own, doing a task instead of us. Sometimes it acts like a service we outsource part of the work to. But the most powerful form is when AI becomes tightly woven into our thinking – when we ask, refine, reject, explore and decide alongside it.

In those moments, AI is not a shortcut. It is a cognitive partner.

Just as a calculator does not make a mathematician less intelligent, but allows them to operate at a higher level, AI has the potential to extend what students can think about and how complex their ideas can become.

The danger is not that students use AI. The danger is that they use it without understanding or ownership of the decisions being made.

So what are we really trying to teach now?

If AI can write, calculate, summarise, generate images and even draft research, what is left for learners?

The answer the paper offers is clear: the centre of gravity shifts. What matters most is not producing the output, but directing the system.

Students increasingly need to know how to frame good questions, how to judge the quality of what comes back, how to notice when something feels wrong, and how to make ethical and contextual decisions about what should be used and what should not. They need to understand purpose, audience and consequence. These are not “soft skills”. They are the core of human agency in an AI-rich world.

This is exactly why simply banning AI misses the point. It protects a version of learning that no longer reflects how thinking actually happens.

Why AI detection is the wrong battle

One of the most powerful ideas in the paper is that we are no longer really dealing with individuals doing tasks alone. We are dealing with human-AI teams.

Trying to separate what the student did from what the AI did is like trying to separate what your brain did from what your notebook did when you worked something out. What matters is not whether a tool was used, but whether the learner was in control of it.

That is why assessment needs to shift away from product-only evidence and toward process-rich approaches: explaining decisions, defending choices, showing iterations and demonstrating understanding. These are the kinds of things AI cannot fake for a student, because they require ownership of thinking.

What this means for classroom practice

If AI is becoming part of students’ extended minds, our role as teachers changes too. We become less like invigilators guarding against tools, and more like designers of thinking environments. We have to create tasks where AI use is expected, but shallow use is exposed.

This is where approaches like project-based learning, portfolios, oral explanation, live critique and reflective design journals become powerful. They don’t just tolerate AI; they make students accountable for how they use it.

The paper describes the coming era as the Mechanocene – a time when human thinking is inseparable from machines. But that does not mean education becomes less human. It means it finally focuses on what only humans can really do: making meaning, exercising judgement, acting ethically and understanding the world.

In the AI age, learning is not about competing with machines.

It is about thinking with them — wisely.

References

Best, J. (2020). The SAMR Model | How to teach with tech | Introduction and examples. [online] 3P Learning. Available at: https://www.3plearning.com/blog/connectingsamrmodel/.

Hernández-Orallo, J. (2025). Enhancement and assessment in the AI age: An extended mind perspective. Journal of Pacific Rim Psychology, 19. Available at: https://doi.org/10.1177/18344909241309376.